Analysis Service Model Developer's Guide

Introduction

In a general sense, a "model" is just a piece of software that consumes some input data, performs some computation, and produces some output data. It may rely on third-party software libraries to provide some portion of its functionality, and might rely on static data files for purposes such as storing model parameters.

This guide gives a high-level introduction to the core concepts involved with developing models for the Analysis Services environment. For more in-depth guidance on developing models in the supported programming languages, please refer to a language-specific model development guide:

- Model Developer's Guide: Python Models

- Model Developer's Guide: R Models

"Dockerised" Models

Within the Analysis Services environment, we allow any user to upload a model and run it over the data stored in the SensorCloud. This gives our users the powerful ability to develop and apply custom analytics to their data, but providing this ability requires overcoming a number of significant challenges:

- How can we ensure that malicious or badly-written model code doesn't compromise any data or our computing infrastructure?

- How can we ensure that all the dependencies for each model are always available whenever the model is run?

- How do we deal with conflicting dependencies for different models (e.g. different library versions)?

- How can we effectively scale our computing requirements as more models are added to the catalogue?

Within the Analysis Services environment, all of these challenges are addressed using a software platform known as Docker. A detailed understanding of Docker is not required to effectively utilise the Analysis Services API, but a little background knowledge is useful to better understand how models are implemented within our environment. The following is a very brief overview of Docker, and how it is used in the Analysis Services environment.

In short, Docker allows for the encapsulation of model code, data files, and dependencies into coherent packages known as "images", and then allows for running those images within isolated environments known as "containers". This is very similar to how more traditional "virtual machines" work, but with one important difference: in the case of a virtual machine, the code runs within an emulated or "virtualised" environment that simulates running on a real machine, whereas a Docker container runs as an isolated process directly on the host operating system's kernel. Since a container shares the host's kernel and runs on the same real hardware, it doesn't incur any emulation or virtualisation overhead, and is therefore more performant.

A Docker image is a set of files that package together the model's code, data files and dependencies, and which specify the runtime environment that the model expects. These images are built in layers (each of which is itself an image), the first layer being a "base image" which forms the foundation upon which the other layers are added. Subsequent layers can add the model's files or dependencies to the image, and can configure the model's runtime environment.

Once the image is built, it is stored in a Docker "registry", from which it can be retrieved whenever the model needs to be run. Running the model then consists of retrieving the image from the registry, installing it onto a host machine that is running Docker, and creating a container from the image. Creating the container generates a new dedicated and isolated environment for the model to run in, and then performs the actual model execution.

Using this approach allows us to address the challenges outlined above:

- Because models run within an isolated container, they are unable to interfere with the host (or, in fact, anything outside the container), except where explicitly permitted by the host (more on that in the "Running Models" section).

- Since the model's image contains its dependencies, they are always available so long as the image remains available.

- Since the model's dependencies are contained in its image (and its image alone), they cannot interfere with dependencies required by other models.

- Computational load can be managed by having many machines with Docker installed, allowing model execution to be spread across a pool of machines.

Although Docker forms the underlying mechanism for describing and executing models, we try to hide as much of that complexity as possible from the users of the system. The remainder of this guide describes how the Analysis Services system works from an end-user perspective, tying back to the underlying Docker implementation only as much as is necessary.

Base Images

As alluded to in the previous section, a Docker image is built out of a number of layers, starting with a "base" layer (also known as a base "image", as all layers are actually images in their own right). This layer forms the foundation upon which the rest of the image is built, and usually provides an implementation of essential components that are needed for the following layers to function. As a concrete example, the Analysis Services system includes a number of base images for developing Python-based models, each of which contains a minimal operating system, a Python interpreter, and a number of standard Python libraries. With these already provided, all that's left for the model author to provide is their own specific Python code, any data files needed by that code, and a list of other Python libraries required to run the code.

For security reasons, we don't allow users to supply their own base images. Instead, we host a number of pre-built base images that have been vetted for security, and which have had a number of dependencies pre-installed for convenience. A list of these base images can be retrieved by issuing a GET request to the /base-images endpoint of the Analysis Services API. This returns a HAL-encoded list of the available base images, for example:

```json
{
    "skip": 0,
    "limit": 1000,
    "count": 2,
    "totalcount": 2,
    "_links": {
        "self": {
            "href": "https://senaps.eratos.com/api/analysis/base-images/?skip=0&limit=1000"
        }
    },
    "_embedded": {
        "baseImages": [
            {
                "id": "1132000a-8b05-4229-8cdc-a7b3bd8fa511",
                "name": "Python 3 Base Image",
                "runtimetype": "PYTHON3",
                "tags": [
                    "python",
                    "python3",
                    "numpy",
                    "pandas"
                ],
                "_links": {
                    "self": {
                        "href": "https://senaps.eratos.com/api/analysis/base-images/1132000a-8b05-4229-8cdc-a7b3bd8fa511"
                    }
                }
            },
            {
                "id": "15e283e0-7406-47b9-92ce-9ffab9123db1",
                "name": "Python 3 StatsModels",
                "runtimetype": "PYTHON3",
                "tags": [
                    "python",
                    "python3",
                    "numpy",
                    "pandas",
                    "statsmodels"
                ],
                "_links": {
                    "self": {
                        "href": "https://senaps.eratos.com/api/analysis/base-images/15e283e0-7406-47b9-92ce-9ffab9123db1"
                    }
                }
            }
        ]
    }
}
```

Pay particular note to the base image ID - this is what is needed to obtain detailed information about a base image, or to use it as a base for a new model (more on that in the section "Installing Models").
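As a minimal sketch of working with this listing in Python, the helper below pulls the ID, name and tags of each image out of the `_embedded.baseImages` list. The function name `summarise_base_images` is hypothetical, and the sample document is a trimmed copy of the example response; fetching the listing from the API (and any authentication that requires) is deliberately left out.

```python
import json


def summarise_base_images(listing):
    """Return (id, name, tags) tuples from a HAL-encoded base image listing."""
    embedded = listing.get("_embedded", {})
    return [
        (image["id"], image["name"], list(image.get("tags", [])))
        for image in embedded.get("baseImages", [])
    ]


# Trimmed copy of the example response (links and paging fields omitted).
SAMPLE_LISTING = json.loads("""
{
  "count": 2,
  "_embedded": {
    "baseImages": [
      {"id": "1132000a-8b05-4229-8cdc-a7b3bd8fa511",
       "name": "Python 3 Base Image",
       "tags": ["python", "python3", "numpy", "pandas"]},
      {"id": "15e283e0-7406-47b9-92ce-9ffab9123db1",
       "name": "Python 3 StatsModels",
       "tags": ["python", "python3", "numpy", "pandas", "statsmodels"]}
    ]
  }
}
""")

if __name__ == "__main__":
    for image_id, name, tags in summarise_base_images(SAMPLE_LISTING):
        print(image_id, name, ", ".join(tags))
```

The IDs returned here are the values a manifest would use to refer to a base image.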

Detailed information about a base image can be obtained by issuing a GET request to the /base-images/<image-id> endpoint, substituting the image ID of interest for the <image-id> placeholder. This returns a HAL-encoded representation of the base image itself. For example, the following is the response for /base-images/1132000a-8b05-4229-8cdc-a7b3bd8fa511:

```json
{
    "id": "1132000a-8b05-4229-8cdc-a7b3bd8fa511",
    "name": "Python 3 Base Image",
    "description": "A base image for Python 3 models, running on Ubuntu 16.04. Comes with NumPy, Pandas and netCDF4 pre-installed.",
    "runtimetype": "PYTHON3",
    "modelroot": "/opt/model/",
    "modeluser": "model",
    "entrypointtemplate": "python3 -m as_models host /opt/model/{entrypoint}",
    "supportedproviders": [
        "PIP",
        "APT"
    ],
    "hostenvironment": {
        "architecture": "X86_64",
        "operatingsystem": "LINUX"
    },
    "tags": [
        "python3",
        "pandas",
        "python",
        "numpy"
    ],
    "_links": {
        "self": {
            "href": "https://dev.senaps.eratos.com/api/analysis/base-images/1132000a-8b05-4229-8cdc-a7b3bd8fa511"
        }
    }
}
```

The following table summarises the properties of a base image.

| Property | Description |
|---|---|
| id | The base image's ID, used to specify a base image in a model manifest (see "Installing Models"). |
| name | A human-friendly name for the image. |
| description | A human-friendly description of the image. |
| runtimetype | The runtime type of the models supported by the image (e.g. PYTHON3). |
| modelroot | The path within the image's filesystem where the model's files will be installed. |
| modeluser | The name of the user that will be used to execute the model. |
| entrypointtemplate | A template for the command that will be used to execute the model (see "Installing Models"). |
| supportedproviders | A list of the package management systems supported by the image for installing additional dependencies (PIP and APT in the example above). |
| hostenvironment | An object describing the host environment the image is designed to run on. This comprises two sub-properties, architecture and operatingsystem. |
| hostenvironment.architecture | The hardware architecture of the host system (X86_64 in the example above). |
| hostenvironment.operatingsystem | The operating system of the host system (LINUX in the example above). |
| tags | A list of arbitrary textual "tags" that are useful for categorising base images. |
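To illustrate how a base image's entrypointtemplate might combine with the entrypoint file declared in a model manifest, the sketch below fills the `{entrypoint}` placeholder using ordinary Python string formatting. That the service performs the substitution in exactly this way is an assumption, and the function name `render_entrypoint` is hypothetical.

```python
def render_entrypoint(template, entrypoint):
    """Fill the {entrypoint} placeholder of an entrypoint template."""
    return template.format(entrypoint=entrypoint)


command = render_entrypoint(
    "python3 -m as_models host /opt/model/{entrypoint}",  # from the example base image
    "model.py",  # the entrypoint file a manifest would declare
)
print(command)
```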

The Manifest File

A manifest file is used when installing new models, to declare the base image the models are to be built upon, the details of the model implementation, and the dependencies that need to be installed to get the models to run.

The following is a typical manifest file, for a Python-based "multivariate mean" model (see the Model Developer's Guide - Python Models for a full tutorial based on this model):

```json
{
    "baseImage": "1132000a-8b05-4229-8cdc-a7b3bd8fa511",
    "organisationId": "my_org_id",
    "groupIds": [],
    "entrypoint": "model.py",
    "dependencies": [],
    "models": [
        {
            "id": "multivariate_mean",
            "name": "Multivariate Mean",
            "version": "0.0.1",
            "description": "Computes mean of multiple aligned data streams.",
            "method": "Downloads observation data into a Pandas DataFrame, optionally weights each column, computes sum of each row, then divides by the sum of the weights.",
            "ports": [
                {
                    "portName": "inputs",
                    "required": true,
                    "type": "multistream",
                    "description": "The streams to be averaged",
                    "direction": "input"
                },
                {
                    "portName": "weights",
                    "required": false,
                    "type": "document",
                    "description": "The weighting of each stream, given as a JSON object of stream_id -> weight pairs. If no weight specified for a stream, weight defaults to 1.0.",
                    "direction": "input"
                },
                {
                    "portName": "output",
                    "required": true,
                    "type": "stream",
                    "description": "The stream to place the averaged data into.",
                    "direction": "output"
                }
            ]
        }
    ]
}
```

The manifest file consists of the following properties:

| Property | Description |
|---|---|
| baseImage | The ID of the base image to be used for the model(s) declared in the manifest. Note that all the models declared in the manifest are built into a single "model image", and so it is not possible to declare multiple models with different base images in a single manifest. |
| organisationId | The ID of a SensorCloud "organisation", corresponding to the organisation that will "own" the models. |
| groupIds | (Optional) The ID(s) of a SensorCloud "group", corresponding to the group(s) that will "own" the models. Note: Each group must be part of the owning organisationId. |
| entrypoint | The name of the image's "entry point" - the file that is to be executed in order to run the model(s). |
| dependencies | A list of third-party dependencies that need to be installed in order for the model(s) to be run (see "Specifying Dependencies" below). |
| models | A list of the models that are declared by the manifest (see "Declaring Models" below). |
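Before uploading, it can be handy to check locally that a manifest declares the top-level properties listed above. The sketch below is only a pre-flight check under stated assumptions: it treats every property not marked optional in the table as required, and the function name `missing_manifest_properties` is hypothetical. It does not reproduce whatever validation the service itself performs.

```python
# Top-level properties from the table above; groupIds is documented as optional.
REQUIRED_PROPERTIES = (
    "baseImage",
    "organisationId",
    "entrypoint",
    "dependencies",
    "models",
)


def missing_manifest_properties(manifest):
    """Return the required top-level properties absent from a manifest dict."""
    return [key for key in REQUIRED_PROPERTIES if key not in manifest]


example_manifest = {
    "baseImage": "1132000a-8b05-4229-8cdc-a7b3bd8fa511",
    "organisationId": "my_org_id",
    "groupIds": [],
    "entrypoint": "model.py",
    "dependencies": [],
    "models": [],
}
print(missing_manifest_properties(example_manifest))
```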

Specifying Dependencies

As most models will require specialised third-party dependencies to be installed in order to run, the manifest allows these dependencies to be declared so that they may be built into the model image at the time it is built.

Each dependency is declared using a JSON object with the following properties:

| Property | Description |
|---|---|
| | The name of the dependency package, as registered with the given package provider. For example, the Python Pandas library is named pandas. |
| | The package manager to use to install the dependency package. The package managers supported by a base image are listed in its supportedproviders property. |
| | An (optional) list of any dependencies which must be resolved before attempting to resolve this dependency. Each value in the list must exactly correspond to the name of another declared dependency. |
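The "must be resolved before" relationship between dependencies amounts to a topological ordering. The sketch below shows one way to compute such an order with Python's standard `graphlib` module (Python 3.9+). Note the assumptions: the property names `name`, `provider` and `dependencies` are guesses, since the table here does not show them, and the two packages are purely illustrative. This is not a description of how the service resolves dependencies.

```python
from graphlib import TopologicalSorter


def install_order(dependencies):
    """Order dependency names so that prerequisites come first.

    Assumes (hypothetically) that each dependency object has a "name" and an
    optional "dependencies" list naming other declared dependencies.
    """
    graph = {
        dep["name"]: set(dep.get("dependencies", []))
        for dep in dependencies
    }
    return list(TopologicalSorter(graph).static_order())


# Illustrative declarations only; property names are assumptions.
declared = [
    {"name": "netCDF4", "provider": "PIP", "dependencies": ["libhdf5-dev"]},
    {"name": "libhdf5-dev", "provider": "APT"},
]
order = install_order(declared)
print(order)
```

A cycle between dependencies would make `static_order()` raise `graphlib.CycleError`, which is a reasonable signal that the declarations need fixing.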

Declaring Models

As a convenience, the manifest file format allows multiple models to be declared within a single manifest. This allows many related models to be implemented within a single model image, thereby making optimal re-use of shared code, data and dependencies.

To declare the models that are implemented within the code, the manifest contains a list of model objects in its models property. Each model object has the following properties:

| Property | Description |
|---|---|
| id | The model's unique ID. This is the ID that will be used to refer to the model within the Analysis Services API. This ID is also used to discover the model's implementation within the model code. The details of this process are different for each supported model development language, so please refer to the language-specific development guides for more information. |
| name | The model's human-friendly name. |
| version | The model's version number. |
| description | A description of what the model does. |
| method | A description of how the model works. |
| ports | A list of port objects, describing the model's inputs and outputs (see the following table for details). |

Each model may declare zero or more input and/or output "ports". These serve as placeholders for the model's inputs, outputs and configuration options, and are bound to specific data when a workflow is created for the model (see Creating Workflows for more information).

Ports come in five different types:

- "Stream" ports accept a single SensorCloud stream as input or output.

- "Multi-stream" ports accept multiple SensorCloud streams as input or output.

- "Document" ports accept a text document as input or output.

- "Grid" ports accept a Thredds dataset (specified using a Thredds catalog URL and dataset path) as input.

- "Collection" ports accept any number of Streams, Documents, or Grids as inputs or outputs.

These ports are declared in the model's manifest using a list of port objects in the model's ports property. The properties of a port object are as follows:

| Property | Description |
|---|---|
| portName | The port's name, used to identify it among all the ports on the model. |
| required | A Boolean flag indicating if a value must be bound to the port in workflows for the model. If true, workflows must define a value for the port, otherwise defining a value for the port is optional. |
| type | The port type, one of stream, multistream, document or grid, corresponding to the stream, multi-stream, document and grid port types respectively. Collection ports can be declared as either stream[], document[], or grid[]. |
| description | A human-friendly description of the port. |
| direction | Either input or output, depending on whether the port is an input port or an output port. |
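A port object can be sanity-checked locally against the rules in the table above. The following is a rough sketch rather than the service's own validation: the function name `port_problems` is hypothetical, and the `multistream` spelling is taken from the example manifest earlier in this guide.

```python
BASE_TYPES = {"stream", "multistream", "document", "grid"}
COLLECTION_TYPES = {"stream[]", "document[]", "grid[]"}
DIRECTIONS = {"input", "output"}


def port_problems(port):
    """Return a list of human-readable problems found in a port object."""
    problems = []
    if not port.get("portName"):
        problems.append("portName is missing or empty")
    if not isinstance(port.get("required"), bool):
        problems.append("required must be true or false")
    if port.get("type") not in BASE_TYPES | COLLECTION_TYPES:
        problems.append(f"unknown type: {port.get('type')!r}")
    if port.get("direction") not in DIRECTIONS:
        problems.append(f"unknown direction: {port.get('direction')!r}")
    return problems


weights_port = {
    "portName": "weights",
    "required": False,
    "type": "document",
    "description": "The weighting of each stream.",
    "direction": "input",
}
print(port_problems(weights_port))
```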

Compute Profiles

Models are provided a guaranteed CPU and RAM allocation when run in the Senaps Analysis Service. At present, the default allocation consists of ¼ of a CPU (time-shared with other processes) and a 256 MB RAM allocation. Depending on load, models may exceed these limits if further resources are available, although this cannot be guaranteed.

To guarantee a higher CPU or memory allocation for your model, you may optionally declare your model to require a certain "compute profile". These profiles, each consisting of a fixed CPU and RAM allocation amount, can be listed at the Analysis Service API's /profiles endpoint.

There are two ways to declare a compute profile for a model:

- With a profileid property at the root of the manifest. The value of this property must be a valid compute profile ID, as listed at the Analysis Service API's /profiles endpoint. This profile will be applied to all models declared in the manifest, unless overridden on a per-model basis (see the next point).

- With a profileid property within a model's declaration in the manifest. If set to a valid compute profile ID, that profile will be applied to the model, overriding the system default and the profile declared at the root of the manifest (if any). Alternatively, if set to null, the model will be forced to use the system default profile (even if a profile is declared at the manifest level).

If no profile is declared in the manifest, the system default profile is applied.

The following partial manifest snippet demonstrates each of these possibilities:

```json
{
    "baseImage": "1132000a-8b05-4229-8cdc-a7b3bd8fa511",
    "organisationId": "my_org_id",
    "groupIds": [],
    "entrypoint": "model.py",
    "dependencies": [],
    "profileid": "L",
    "models": [
        {
            "id": "model1",
            "name": "Model 1",
            "version": "0.0.1",
            "description": "First example model",
            "method": "",
            "ports": []
        },
        {
            "id": "model2",
            "name": "Model 2",
            "version": "0.0.1",
            "description": "Second example model",
            "method": "",
            "profileid": "S",
            "ports": []
        },
        {
            "id": "model3",
            "name": "Model 3",
            "version": "0.0.1",
            "description": "Third example model",
            "method": "",
            "profileid": null,
            "ports": []
        }
    ]
}
```

In this case, the model model1 will run with the L profile which was declared at the manifest level, since it wasn't overridden in the model declaration. The model model2 will run with the S profile, as explicitly declared in the model's declaration, overriding the manifest-level declaration. The model model3 will run with the system default profile, as the null profile listed in its declaration overrides that at the manifest level.
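The override rules can be summarised in a few lines of Python. This is a sketch of the behaviour as described in this section, not the service's implementation; the function name `effective_profile` is hypothetical, and a return value of `None` stands for the system default profile.

```python
def effective_profile(manifest, model):
    """Return the profile ID that applies to a model, or None for the default.

    A profileid key on the model wins, even when its value is None (JSON null);
    otherwise the manifest-level profileid applies, if there is one.
    """
    if "profileid" in model:
        return model["profileid"]
    return manifest.get("profileid")


# Reduced version of the example manifest (only the relevant properties).
manifest = {
    "profileid": "L",
    "models": [
        {"id": "model1"},
        {"id": "model2", "profileid": "S"},
        {"id": "model3", "profileid": None},
    ],
}
profiles = {m["id"]: effective_profile(manifest, m) for m in manifest["models"]}
print(profiles)
```

The key point is the distinction between a missing profileid (inherit from the manifest) and an explicit null (force the system default), which is why the check uses key membership rather than a truthiness test.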

Installing Models

Installing a model within the Analysis Services environment consists of uploading a zip file containing the model's manifest, the various code files implementing the model, and any other resource files required by the model. Within that zip file, the manifest must be contained within a file named manifest.json placed at the root of the zip file, but all the other files can be named whatever is convenient (so long as the main model "entrypoint" file is named as declared within the manifest).
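As a sketch of the archive layout just described, the following Python builds a zip file in memory with manifest.json at its root alongside the model's other files. The function name `build_model_archive` and the tiny placeholder model file are hypothetical; only the manifest.json name and its position at the root of the archive come from this guide.

```python
import io
import json
import zipfile


def build_model_archive(manifest, files):
    """Return zip bytes containing manifest.json at the root plus model files.

    `files` maps archive-relative file names to their text content.
    """
    if manifest.get("entrypoint") not in files:
        raise ValueError("the manifest's entrypoint file is not among the files")
    buffer = io.BytesIO()
    with zipfile.ZipFile(buffer, "w", zipfile.ZIP_DEFLATED) as archive:
        archive.writestr("manifest.json", json.dumps(manifest, indent=4))
        for name, content in files.items():
            archive.writestr(name, content)
    return buffer.getvalue()


archive_bytes = build_model_archive(
    {"entrypoint": "model.py", "models": []},  # abbreviated manifest
    {"model.py": "# model code goes here\n"},  # placeholder model file
)
print(len(archive_bytes), "bytes")
```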

The zip file must be uploaded to the Analysis Services environment using the API's POST /models endpoint. This expects the request to be formatted as an HTTP multipart/form-data request, with the zip file contained within the body's archive form part. An example of doing so using the curl command line tool is as follows:

```shell
curl -X POST -H "apikey: <your api key>" -F "archive=@/path/to/model/archive.zip" https://senaps.eratos.com/api/analysis/models
```

This uploads the zip file at /path/to/model/archive.zip to the Analysis Services API hosted at https://senaps.eratos.com/api/analysis.
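The same upload can be sketched in Python using only the standard library. The helper below constructs the multipart/form-data request by hand, with the zip content in a form part named archive; it only builds the request object and does not send it. The function name `build_upload_request` is hypothetical, and the `apikey` header name simply mirrors the curl example.

```python
import urllib.request
import uuid


def build_upload_request(url, api_key, archive_bytes, filename="archive.zip"):
    """Build (but do not send) a multipart/form-data POST carrying the zip."""
    boundary = uuid.uuid4().hex
    body = b"".join([
        f"--{boundary}\r\n".encode(),
        f'Content-Disposition: form-data; name="archive"; filename="{filename}"\r\n'.encode(),
        b"Content-Type: application/zip\r\n\r\n",
        archive_bytes,
        f"\r\n--{boundary}--\r\n".encode(),
    ])
    return urllib.request.Request(
        url,
        data=body,
        method="POST",
        headers={
            "apikey": api_key,
            "Content-Type": f"multipart/form-data; boundary={boundary}",
        },
    )


request = build_upload_request(
    "https://senaps.eratos.com/api/analysis/models",
    "<your api key>",
    b"placeholder zip bytes",  # replace with the real archive content
)
print(request.get_method(), request.full_url)
```

Passing the request to `urllib.request.urlopen(request)` would perform the upload; that step needs network access and a valid API key, so it is not shown.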

Upon performing this upload, the system will extract the files from the zip archive, process and validate the manifest, then build a Docker image containing the model, its associated data files, and any required third-party dependencies.

If successful, the response will contain the details of the newly installed model. For example, the following is an example response from uploading the Python version of the "multivariate mean" tutorial model:

```json
{
    "imagesize": 1323,
    "_embedded": {
        "models": [
            {
                "id": "multivariate_mean",
                "name": "Multivariate Mean",
                "version": "0.0.1",
                "organisationid": "my_org_id",
                "groupids": [],
                "method": "Downloads observation data into a Pandas DataFrame, optionally weights each column, computes sum of each row, then divides by the sum of the weights.",
                "description": "Computes mean of multiple aligned data streams.",
                "_links": {
                    "self": {
                        "href": "https://senaps.eratos.com/api/analysis/models/multivariate_mean"
                    }
                }
            }
        ]
    }
}
```

This indicates that the installed model consumes approximately 1.3 kB of disk space. It also echoes back much of the model's metadata from the manifest, and provides an API URL corresponding to the installed model (https://senaps.eratos.com/api/analysis/models/multivariate_mean in this case). The model is now available to use!

Upload Model to Senaps

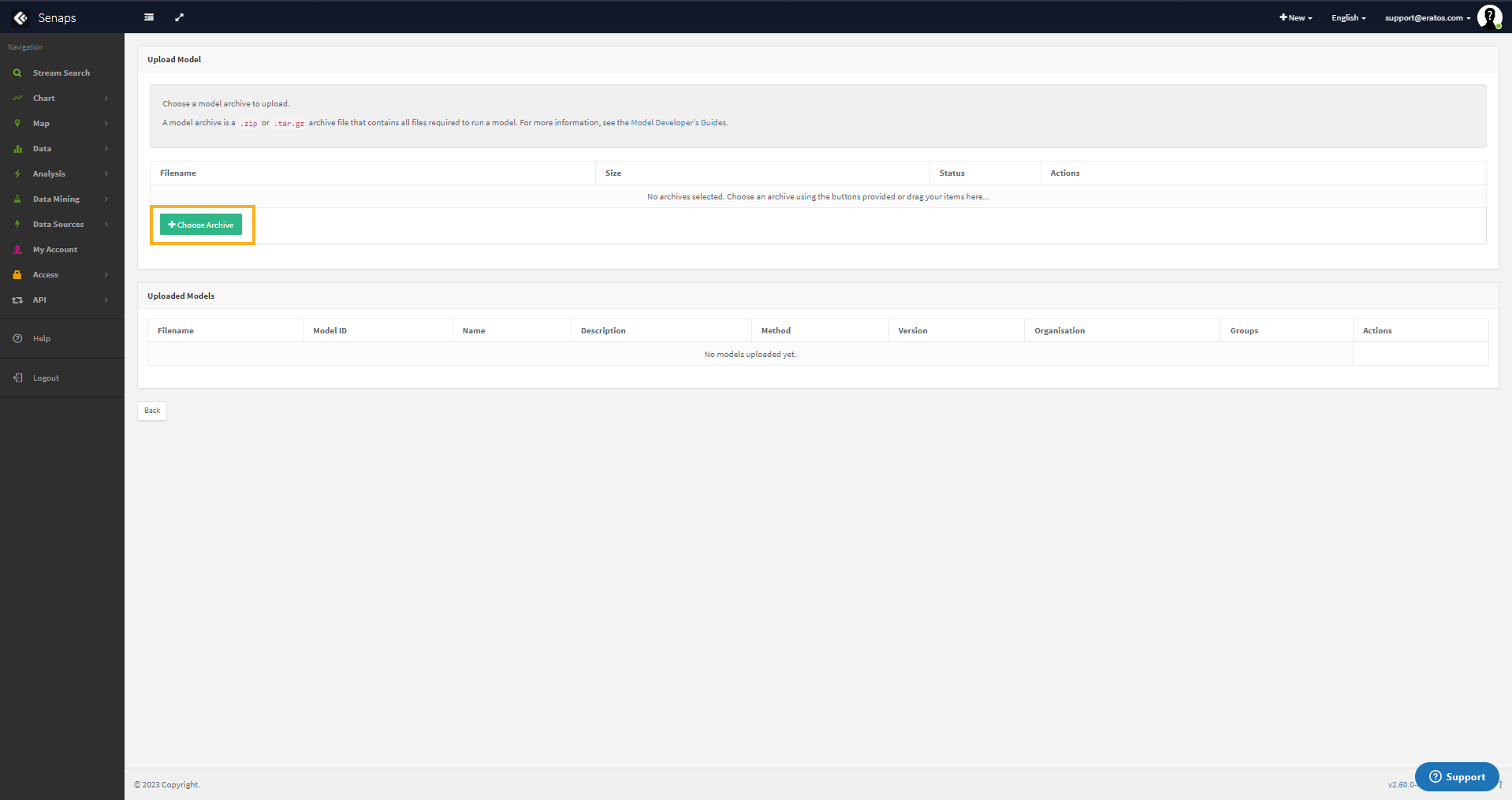

- Zip the manifest and the model files into a single zip file

- Head to the Senaps Dashboard

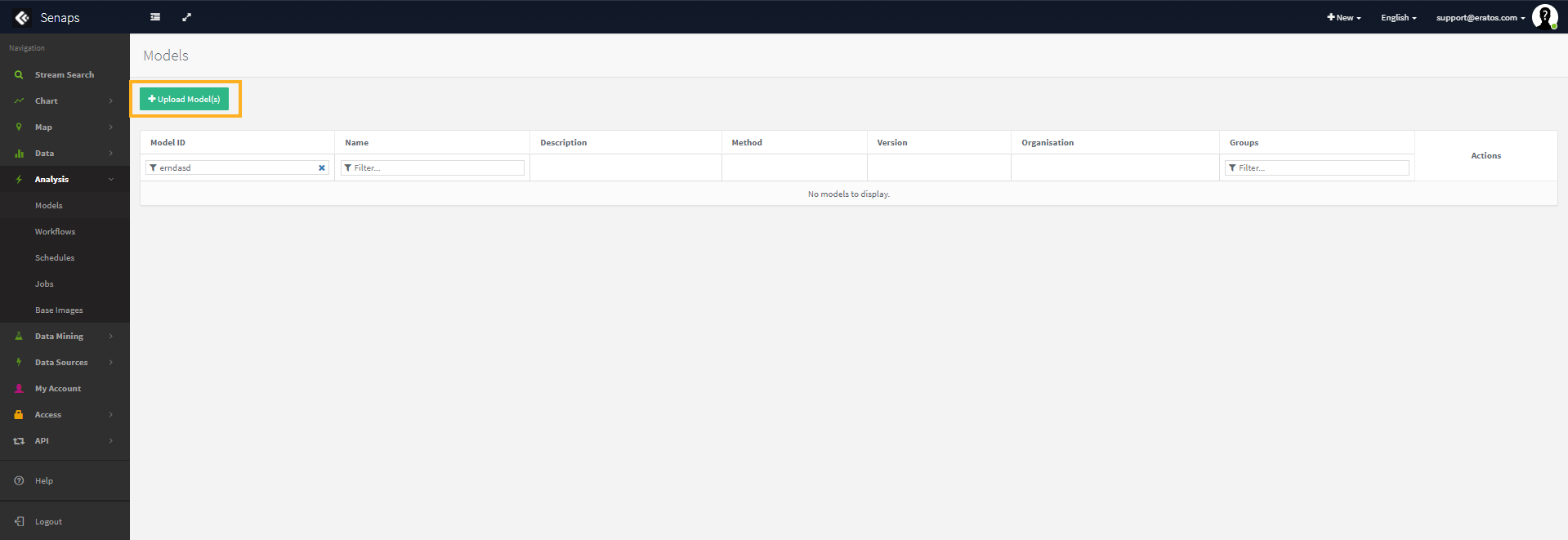

- Click Analysis -> Models in the left-hand menu

- Click Upload Models

- Click Choose Archive, and select the ZIP file you created above

Senaps will then either upload the model or report that the upload failed.

Once the model is uploaded, you can run it as a workflow and then schedule that workflow to run at specific times.
